The Shape of the Thing

Where we are right now, and what likely happens next

In October of 2023, I wrote about the “Shape of the Shadow of the Thing,” speculating on the Thing that AI might turn into in the coming years. I think we can see the Thing much more clearly now, and some of the consequences that come with it. As I have been discussing in recent posts, we have entered a new phase of AI. After ChatGPT was introduced, human-AI work took the form of what I called co-intelligence, where humans would prompt AI back-and-forth to get help on tasks. Starting in late 2025, we entered a new era thanks to AI agents like Claude Code, OpenAI’s Codex, and OpenClaw. These are AI systems that you can just give work to, sometimes hours of human work, and get back reasonable and useful results in minutes. This is an era of managing AIs, rather than working with them.

This new approach to AI is the outcome of the rapid exponential improvement in AI abilities. That means you can’t understand where we are, and where we might be going, without understanding the increasing capability of AI.

Riding up the Exponential

Exponential improvements are hard to visualize, so rather than charts or graphs, I want to start with otters. If you have followed my writing on AI, you know about my Otter Test, where I challenge various AI image models to show a picture of an “otter on a plane using wifi.” As you can see below, the progress from 2022 (the year ChatGPT launched) to 2025 was rapid and remarkable.

So, what has happened in the time since that April, 2025 image? With nearly perfect images, video has become the new frontier and has also seen exponential gains. To demonstrate, I gave the most advanced (and still unreleased in the US) AI video model from TikTok maker Bytedance, the prompt: A documentary about how otters view Ethan Mollick's "Otter Test" which judges AIs by their ability to create images of otters sitting in planes. This is the very first result — definitely turn on your sound:

Aside from a single pronunciation mistake, this is pretty perfect, down to the fact that the otters are animated to have human-like expressions. Of course, video models are cool, but they are not necessarily indicative of what useful agentic AI can do. So, what if we look at the benchmarks of AI ability, do we see the same exponential curve?

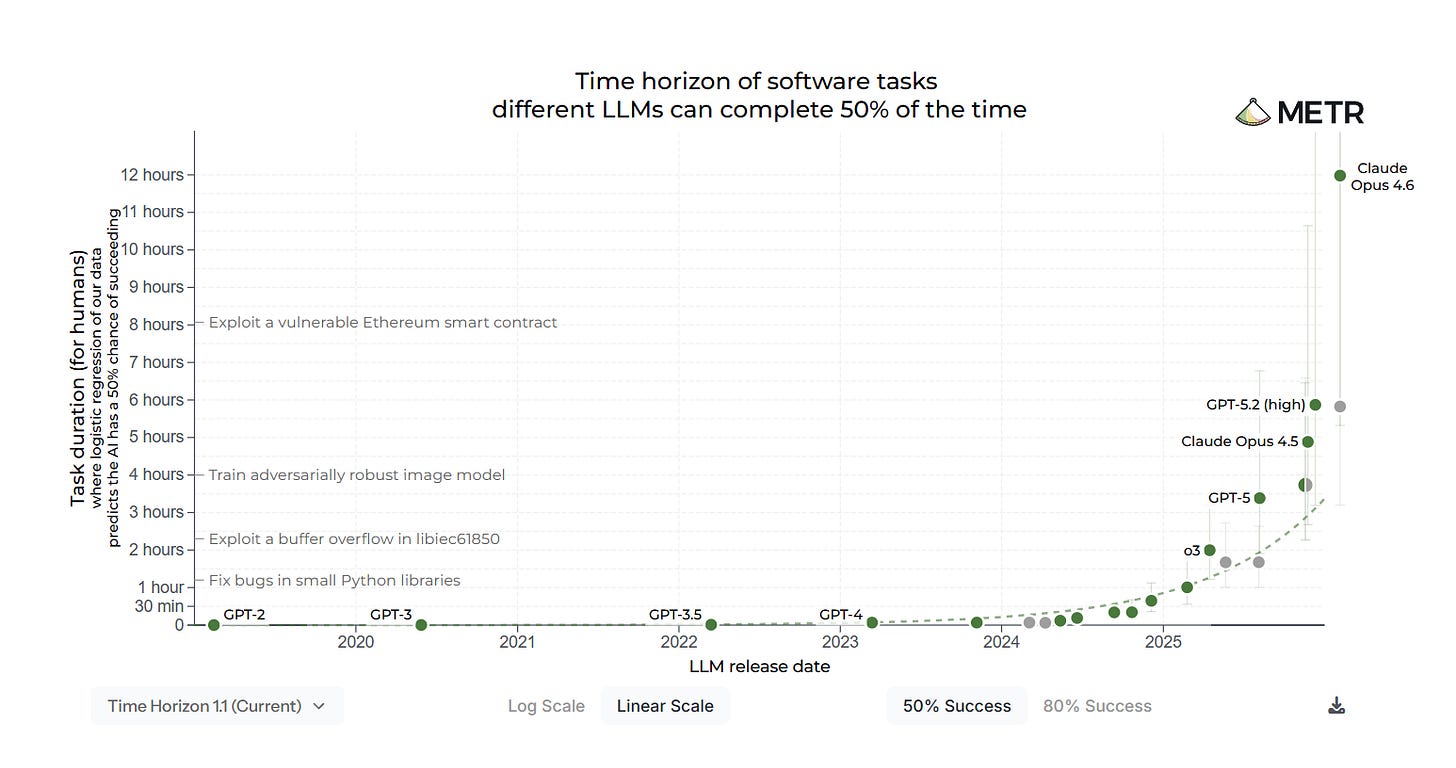

We certainly do in the most famous evaluation in AI today, the METR Long Tasks graph. It tries to measure AI progress by seeing how much human work an AI can complete autonomously with some measure of reliability. It has attracted its share of critics, and even METR has pointed out potential issues. But if you don’t like the METR graph, you will find most graphs of AI ability have that same curve.

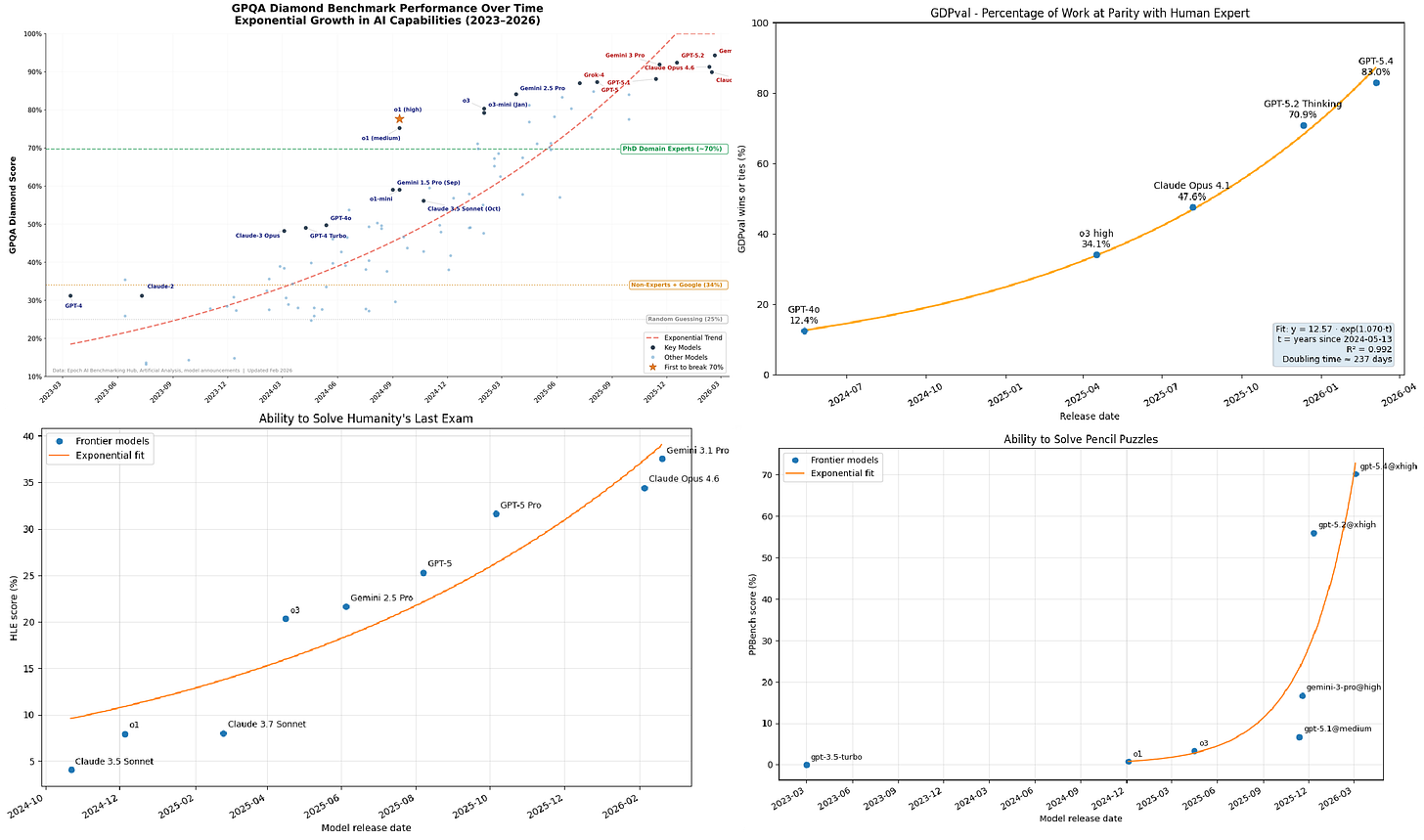

As an example, I picked four hard and diverse AI tests and graphed progress over time in the image below. In the upper left are the scores on the Google-Proof Q&A benchmark, a test of knowledge where graduate students using Google only score 34% outside their field and 70% or so inside of it, but the best AIs now score 94%. Or look at GDPval, where industry experts judge AI versus experienced human performance on complex tasks, and where the latest AIs now reach or exceed parity with top-performing humans 82% of the time. The same pattern holds for Humanity’s Last Exam, a set of very hard problems written by college professors that require considerable expertise to answers. Or we can even use the ability of AI to solve puzzles (you can try the puzzles here, they are fun!). Each shows a similar rapid gain in ability with few signs of slowdown, at least until they reach the top possible score on the test.

Exponential graphs aside, it is important to recognize that all of these tests have their own flaws, and that AI remains jagged, capable of some tasks at a high level, while messing up others. Further, despite these amazing capabilities in tests, companies are still very early in adopting AI, meaning that, as of yet, remarkably little has changed in most organizations. But “most organizations” doesn’t mean every organization. We are already starting to see the first appearances of new approaches to organizing that take advantage of the new abilities of AI agents.

Radical Changes to Work

A few weeks ago, a three-person team at StrongDM, a security software company focusing on access control, announced they had built a Software Factory — a way of working with AI agents that relied entirely on the AI to write, test, and ship production software without human involvement. The process included two (quite radical) rules: “Code must not be written by humans” and “Code must not be reviewed by humans.” To power the factory, each human engineer is expected to spend amounts equivalent to their salary on AI tokens, at least $1,000 a day.

The basic idea of the Factory is that it takes future product roadmaps, written by humans, and turns those into products. Coding agents use those roadmaps to build software while testing agents try out the software in a simulated customer environment (which the testing agents build as needed). The sets of agents provide feedback to each other, looping back-and-forth until the results satisfy the AI. Then humans review the finished product and the results are shipped to customers without anyone every touching, or even seeing, the underlying code.

There are obviously a lot of details here that make this approach work, and the StrongDM team has shared a lot of them publicly. They also invited in some smart outside observers to watch the Factory in operation and comment on what they saw, so you can read the accounts of Simon Willison and Dan Shapiro to get a better sense of the strengths and weaknesses of their approaches. In many ways, however, the particular details of the Software Factory matter less than the fact that such radical experimentation into how we work is now not only possible, but likely necessary. AI is good enough to change how organizations operate, and the experimentation is just getting started, even as models continue to improve.

Rolling Disruption

Practical agents, jagged exponential improvement, and the ability to radically experiment with the nature of work combine to form a sort of rolling and unpredictable environment for AI advances. As AI capability crosses thresholds, it unlocks radical new use cases that change people’s views, sometimes overnight, about what AI can do. At the same time, organizations experimenting with AI will figure out how to make it work for them, leading to sudden announcements about new strategies or large-scale shifts in which kinds of employees companies value most. Plus, as AI continues to improve, more policymakers will become interested in AI governance, creating conflicts with AI companies.

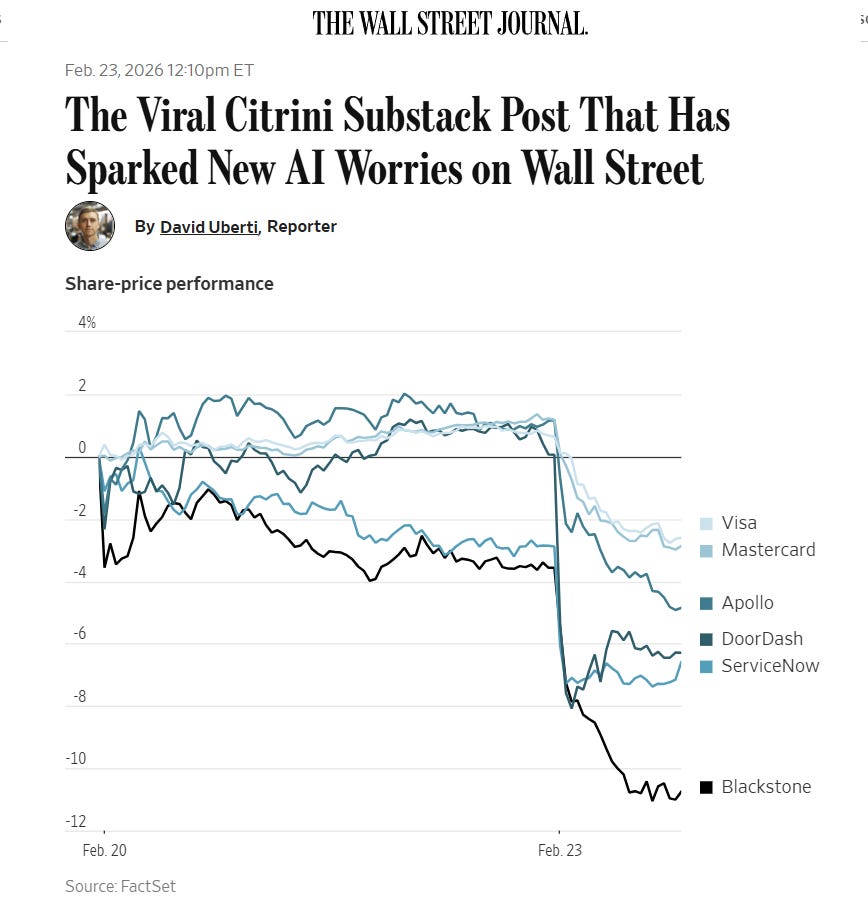

This isn’t speculation because we saw this all happen in a single week. On February 22nd, a little-known financial firm, Citrini Research, published a fictional scenario about how AI adoption might destroy a number of established businesses by 2028. There were many elements in the piece that were clearly farfetched, but it struck a nerve on Wall Street, leading to major stock market price shifts. On February 26, financial services company Block announced 40% layoffs, implying this was due to AI. It is likely that the role of AI was greatly exaggerated, and AI was merely used as cover for large-scale layoffs. And then, to cap off the week, on February 27 a very public conflict occurred between the Pentagon and AI company Anthropic over who should be able to control the rules for how Claude could be used by the government.

In a lot of ways, each of those cases were not what they first appeared to be. The Citrini report was a fictional scenario, the Block layoffs were not about AI, and the conflict over AI at war revolved around a number of complicated issues that are still not completely clear. But I think that single week is a good illustration of what the near future will feel like. Sudden revelations about AI capability leading to rapid market reactions. Increasingly real impacts of AI on jobs (even if there is a lot of debate over whether those impacts will be good or bad in the short term). And increasing entanglement between AI companies and policymaking around the world. As the stakes go up, it is likely things will feel even more unstable.

It is possible, of course, that things settle down. Maybe AI improvement hits a wall, organizations absorb the changes gradually, and the rolling disruptions become more manageable as people learn what AI can and can’t do. History is full of technologies that were supposed to change everything overnight but instead took decades to fully reshape the economy.

But I wouldn’t bet on it.

One reason is that AI companies are telling us, fairly explicitly, what comes next: recursive self-improvement, or RSI. This is the idea that AI systems are increasingly being used to build better AI systems, creating a feedback loop that could accelerate the very curves I showed you above. At Davos in January, Anthropic’s Dario Amodei explained that if you make models that are good at coding and good at AI research, you can use them to build the next generation of models, speeding up the loop. He noted that engineers within Anthropic barely write code themselves anymore. When OpenAI released its latest Codex model in February, the company stated it was “our first model that was instrumental in creating itself.” And Google DeepMind’s Demis Hassabis acknowledged at the same Davos panel that closing the self-improvement loop is something all the major labs are actively working on, even as he warned there are still missing capabilities and real risks.

We don’t know how far this goes. RSI has been a theoretical concept for decades, and the labs may hit bottlenecks, whether in compute, in data, or in the sheer difficulty of AI research. We also don’t know whether LLM-based AIs will eventually hit a ceiling where they cannot get any better, or where the jagged frontier never smooths out. I don’t think we know anything for certain, but I also think we are past the point where recursive self-improvement is science fiction. Instead, it is an explicit item on the roadmap of every major AI company. If the loop does close, the exponential curves we’ve been watching would get steeper, with an uncertain endpoint.

So here is where we are today: the instability of that single week in February was a preview of what it feels like when the increasing ability of AI starts to interact with markets, jobs, and governments all at once. That feeling of uncertainty will likely only spread further. But uncertainty is not the same as helplessness. When a technology is this powerful and this unsettled, the choices that individuals and organizations make right now matter more. We can see the shape of the Thing now, but we can still influence the Thing itself, and what it means for all of us. We clearly don’t have rules or role models for how AI gets used at work, in schools, or in government. That’s a problem, but it also means that every organization figuring out a good way to use AI right now is setting a precedent for everyone else. The window to shape the Thing may not last long, but it is here now.

What jumps out is how quickly 'managing AIs' becomes another room where we're expected to be coherent and composed before we've built any shared norms for doing that. We're reshaping work structures faster than we're reshaping the stories people tell themselves about their own agency inside those structures. That gap, the curve outpacing the human narrative, is where a lot of the coming whiplash will live. Great insights!

"Uncertainty is not the same as helplessness" Amen. From our research: what paralyses people about AI isn't the tool(s) or hockey sticks, it's the unresolvable consequences. And the antidote isn't expertise or seriousness; it's curiosity. Play turns uncertainty from a threat into a threshold. There's a professional case for fun that most organisations haven't figured out yet, or are tripping up on trying to take all this seriously!