Reshaping the tree: rebuilding organizations for AI

Technological change brings organizational change.

I want to talk about the future of organizations, but to do that, we need to start with their past. In fact, I want to start as far away from AI as possible, with the New York and Erie Railroad of 1855. Stick with me, I promise it will make sense in a paragraph or two.

The railroad faced a huge new technological and organizational problem: how do we organize work across a huge geographic distance with a huge number of employees, all while maintaining centralized control and planning? Using the technology of the time, the telegraph, executive Daniel McCallum came up with a solution: the world’s first organizational chart.

The chart, long lost before being rediscovered by Caitlin Rosenthal, is a thing of beauty. Drawn as a plant, with branches and leaves, it shows both geography (each rail line and station is indicated) and organizational structure: each employee is indicated, down to leaves for each wiper or engineman. If you remove those gorgeous touches, replacing the organic forms with lines and boxes, and flipping it on its head, you get the modern organizational chart, remarkably unchanged for a century and a half.

Each new wave of technology ushered in a new wave of organizational innovation. Henry Ford took advantage of advances in mechanical clocks and standardized parts to introduce assembly lines. Agile development was created in 2001, taking advantage of new ways of working with software and communicating via the internet, in order to introduce a new method for building products.

All of these methods are built on human capabilities and limitations. That is why they have persisted so long - we still have organizational charts and assembly lines. Human attention remains finite, our emotions are still important, and workers still need bathroom breaks. The technology changes, but workers and managers are just people, and the only way to add more intelligence to a project was to add people or make them work more efficiently.

But this is no longer true. Anyone can add intelligence, of a sort, to a project by including an AI. And all the evidence suggests that people are already doing so; they just aren’t telling their bosses about it. A new survey found that over half of people using AI at work are doing so without approval, and 64% have passed off AI work as their own.

This sort of shadow AI use is possible because LLMs are uniquely suited to handling organizational roles: they work at a human scale. They can read documents, write emails, adapt to context, and assist with projects without requiring users to have specialized training or complex custom-built software. While large-scale corporate installations of LLMs may add some advantages, like integration with a company’s data (though I wonder how much value this adds), anyone with access to GPT-4 can just start having AI do work for them. And they are clearly doing just that.

What does it mean for organizations when we acknowledge that this is happening? We have the same challenge Daniel McCallum had 150 years ago: how to rebuild an organization around a fundamental shift in the way work is done, organized, and communicated. I don’t have the answers to how to do this yet. Nobody does. But I can give you a preview of what it might look like.

Rebuilding a process

I help lead Wharton Interactive, a small internal software startup inside of Wharton devoted to transforming education through AI-powered simulations. Unsurprisingly, we embraced the power of LLMs early on. Because we have been careful about building an organization with a culture of exploration, we do not have a Secret Cyborg problem and everyone has been very willing to share their uses with the rest of the team. So I know that our customer support team uses AI to generate on-the-fly documentation, both in our internal wiki and for customers. Our CTO taught the AI to generate scripts in the custom programming language we use (a modified version of Ink, a language for interactive games). We use it to add placeholder graphics, to code, to ideate, to translate emails for international support, to help update our HTML in our websites, to write marketing material, to help break down complex documentation into simple steps, and much more. We have effectively added multiple people to our small team, and the total compensation of these virtual team members is less than $100 a month in ChatGPT Plus subscriptions and API costs.

But, in many ways, this is just the start. Because what we are starting to think about is how to completely change processes: how to cut down the organizational tree and regrow it. Doing so will involve changing a lot about how we are used to working. Take, for example, the process we use when designing a new feature for our core teaching platform, like a screen that gives feedback on game progress for our Entrepreneurship Game.

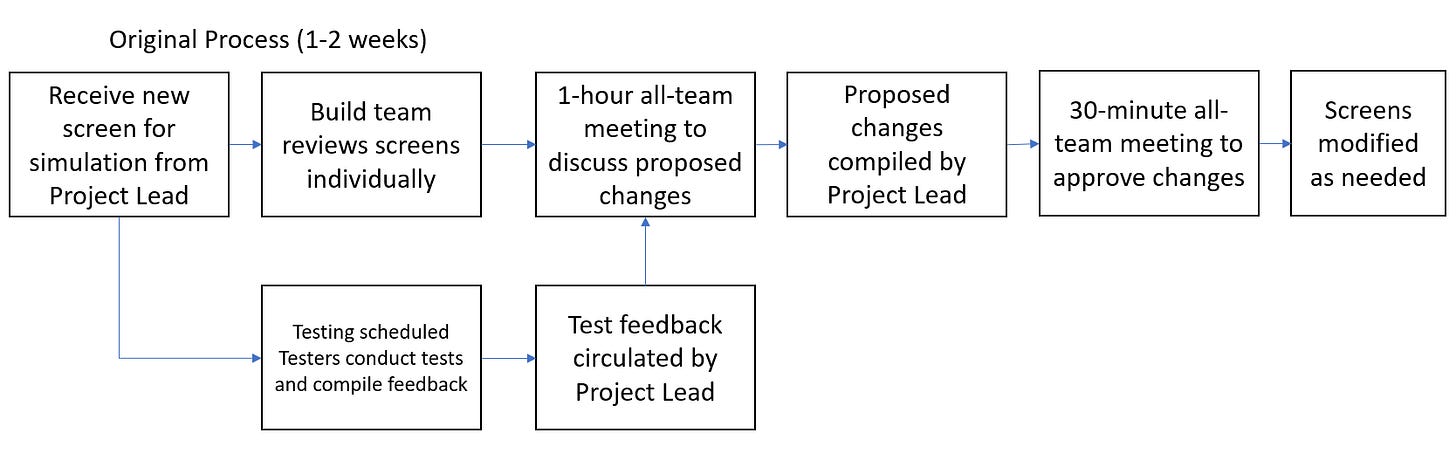

Since nobody has built the kinds of complex educational games we are developing, we have to do a lot of fundamental thinking - how do we display scores for many different learning objectives? How do we keep a feeling of experimentation when people are being graded on their results? How do we translate abstract game decisions into points? Designing a feature is a complicated and iterative process that, if written down, would look something like this:

This process involves multiple meetings and lots of time and energy. But how could we redesign this to incorporate AI as not just a tool, but also as an “intelligence” that can add to the process, not just automate it?

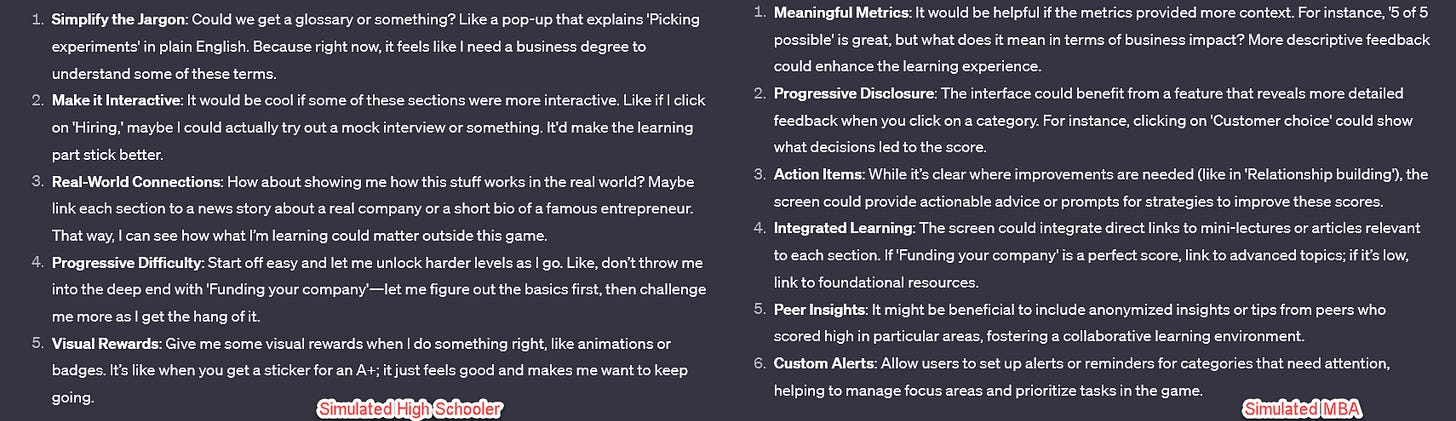

Well, one thing that AI is quite good at doing is providing feedback. We know from research that you can get reasonably good simulated feedback from AI personas - not as accurate as a real person, of course, but good enough for an interim step. So, let’s give our testers a chance to focus on more important tasks and have the AI do a first pass at user feedback. I pasted in the screenshot you saw above and wrote: “you are an 1st year MBA student playing the entrepreneurship game created by Wharton Interactive. This is the grading screen you see part way through the game. give me your reactions and ways to improve it, given your perspective. Do it in character.” (Then I asked for the same from a high school perspective.) Not too bad as a way of getting some initial reactions.
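If you want to repeat this first-pass feedback step for several personas, the prompt can be templated. The snippet below is only a minimal sketch: `PROMPT_TEMPLATE` and `build_persona_prompts` are hypothetical names, not anything we actually use, the wording is lightly tidied from the prompt quoted above, and the screenshot still has to be attached by hand in whatever chat tool you are using.

```python
# Hypothetical helper for illustration; these names are assumptions, not from the post.
PROMPT_TEMPLATE = (
    "You are {persona} playing the entrepreneurship game created by "
    "Wharton Interactive. This is the grading screen you see part way "
    "through the game. Give me your reactions and ways to improve it, "
    "given your perspective. Do it in character."
)


def build_persona_prompts(personas):
    """Return one feedback prompt per persona, keyed by the persona description."""
    return {p: PROMPT_TEMPLATE.format(persona=p) for p in personas}


# The two perspectives mentioned in the text.
prompts = build_persona_prompts(["a 1st year MBA student", "a high school student"])

for persona, prompt in prompts.items():
    print(f"--- {persona} ---")
    print(prompt)
    print()
```

Adding another perspective is then just one more entry in the list, which keeps the wording identical across personas so the reactions are easier to compare.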

What else can we change in our process? Let’s move on to that first one-hour meeting, where we go over all the external and internal feedback as a group. Now, meetings are important ways to achieve consensus and generate ideas, but pretty bad places to gather and collate information. What if we can reduce that meeting down from an hour by having the AI do the synthesis for us? Everyone can just use an AI voice transcription service to provide their feedback. I simulated elaborate feedback from team members Alice, Bill, and Carol, and asked GPT-4 to compile all of the results of proposed changes in a table. Now, the Project Lead can review this document (the AI still makes mistakes) and modify it, creating an agenda for the shortened meeting.
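The synthesis step can be sketched the same way: gather each person's transcribed feedback into a single request for a table. This is an illustration under assumptions rather than our actual workflow. `build_synthesis_prompt`, the placeholder transcripts, and the column names are all invented here, and the resulting text would still be pasted into (or sent to) whatever model you use.

```python
def build_synthesis_prompt(feedback_by_member):
    """Combine each member's transcribed feedback into one request for a table."""
    sections = [
        f"Feedback from {name}:\n{text.strip()}"
        for name, text in feedback_by_member.items()
    ]
    # The column names below are an assumption, chosen only for illustration.
    instruction = (
        "Compile all of the proposed changes in the feedback above into a table "
        "with the columns: Proposed change, Suggested by, Rationale."
    )
    return "\n\n".join(sections + [instruction])


# Placeholder transcripts, invented for illustration only.
feedback = {
    "Alice": "<Alice's transcribed feedback>",
    "Bill": "<Bill's transcribed feedback>",
    "Carol": "<Carol's transcribed feedback>",
}

synthesis_prompt = build_synthesis_prompt(feedback)
print(synthesis_prompt)
```

The table that comes back is a draft for the Project Lead to check and edit, not a finished agenda.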

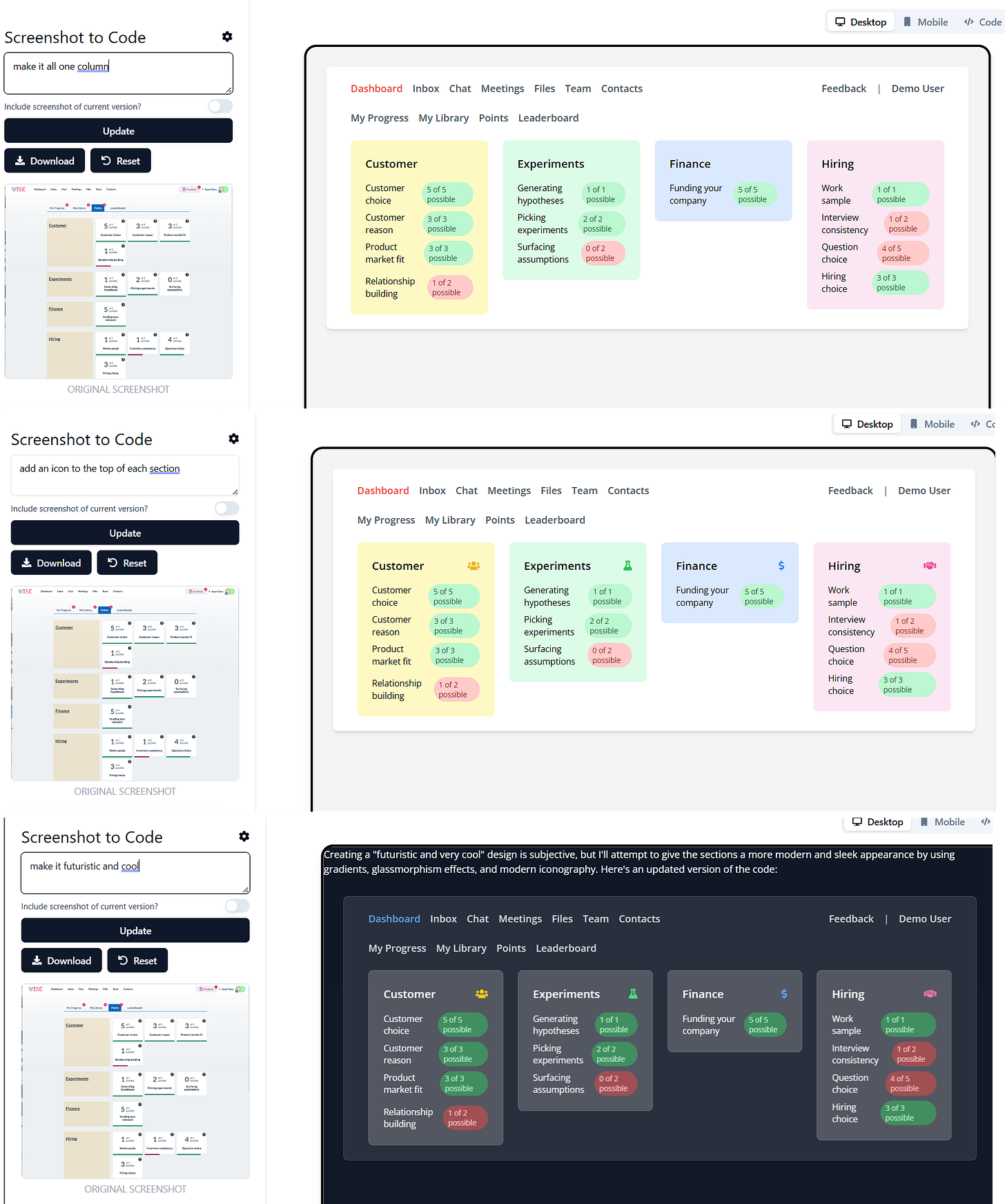

But the AI can go further. I asked it to create sample HTML pages illustrating possible changes, just so it would be easier to visualize our options. And the meeting itself can focus on building, not just planning. Instead of a long meeting compiling information, we can use one of a large number of free tools that use GPT-4 to create HTML prototypes on the fly from screenshots and drawings (here I used the free screenshot-to-code). We aren’t just meeting; we are instantly able to ask for changes and see a prototype of the results, even without coding experience: make it one column, add icons, make it look more futuristic, etc. And these are just off-the-shelf projects built with GPT-4. Imagine what the AI models in coming months will do.

During this entire process, the AI is, of course, recording the meeting and giving us takeaways and to-dos at the end. This is a feature already included in Microsoft Teams Copilot, and will, I am sure, be coming to other conference software soon. After the meeting, our developer can not only ask the AI about points raised in the meeting, but also gets a working prototype to build from. And, of course, they are getting AI assistance in actually implementing the prototype, adding more speed.

So, even with today’s tools, we can radically change our process. Theoretical discussions become practical. Drudge work is removed. And, even more importantly, hours of meetings are eliminated and the remaining meetings are more impactful and useful. A process that used to take a week can be reduced to a day or two.

And this is not even the most imaginative version of this sort of future. We already can see a world where autonomous AI agents start with a concept and go all the way to code and deployment with minimal human intervention. This is, in fact, a stated goal of OpenAI’s next phase of product development. It is likely that entire tasks can be outsourced largely to these agents, with humans acting as supervisors.

How to rebuild organizations

Even without agents, AI is impacting organizations, and managers need to start taking an active role in shaping what that looks like. Like everything else associated with AI, there is no central authority that can tell you the best ways to use AI - every organization will need to figure it out for themselves. I would like to propose a few principles, however:

Let teams develop their own methods. Given that AIs perform more like people than software (even though they are software), they are often best managed as additional team members, rather than external IT solutions imposed by management. Teams will need to figure out their own ways to use AI through ethical experimentation, and then will need a way of sharing those methods with each other, and with organizational leadership. Incentives and culture will need to be aligned to make this happen, and guidelines will need to be much clearer for employees to feel free to experiment.

Build for the oncoming future. Everything I have shown you is already possible today using GPT-4. But if we learned one thing from the OpenAI leadership drama, it is that more advanced models are coming, and coming fast. Organizational change takes time, so those adapting processes to AI should be considering future versions of AI, rather than just building for the models of today.

You don’t have time. If the sort of efficiency gains we are seeing from early AI experiments continue, organizations that wait to experiment will fall behind very quickly. If we truly can trim a weeks-long process into a days-long one, that is a profound change to how work gets done, and you want your organization to get there first, or at least be ready to adapt to the change. That means providing guidelines for short-term experimentation, rather than relying on top-down solutions that take months or years to implement.

I am not sure who said it first, but there are only two ways to react to exponential change: too early or too late. Today’s AIs are flawed and limited in many ways. While that restricts what AI can do, the capabilities of AI are increasing exponentially, both in terms of the models themselves and the tools these models can use. It might seem too early to consider changing an organization to accommodate AI, but I think that there is a strong possibility that it will quickly become too late.

Terrific post. It’s exciting to see people doing more than just generating silly images and fake term papers.

Your first principle suggests that one consequence of rebuilding organizations along these lines is that managers will have less power. Conflicts over remote work and recent successes by labor unions are other signs of a general realignment between managers and workers, especially knowledge workers. What other signs should we watch out for in terms of organizational or economic restructuring?